Global Experiences with HPC Operational Data Measurement, Collection and Analysis

Sep 1, 2020

Michael Ott

Woong Shin

Norman Bourassa

Torsten Wilde

Stefan Ceballos

Melissa Romanus

Natalie Bates

Abstract

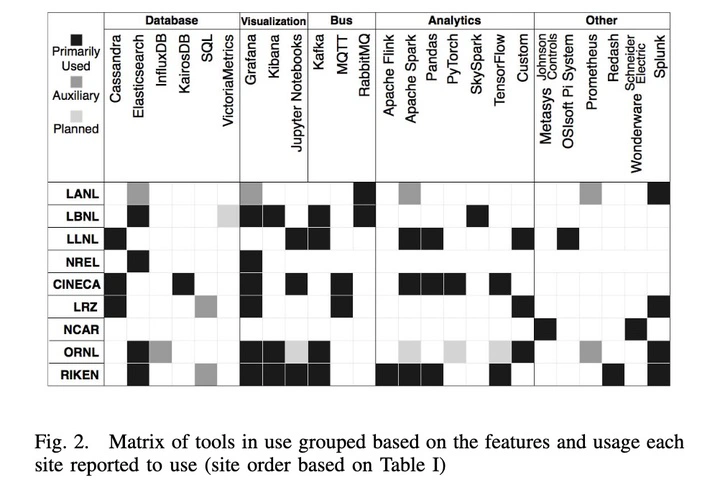

As we move into the exascale era, supercomputers grow larger, denser, more heterogeneous, and ever more complex. Operating such machines reliably and efficiently requires deep insight into the operational parameters of the machine itself as well as its supporting infrastructure. To fulfill this need, early-adopter sites have started the development and deployment of Operational Data Analytics (ODA) frameworks that allow the continuous monitoring, archiving, and analysis of near-real-time performance data from the machine and infrastructure levels, providing immediately actionable information for multiple operational uses. To understand their ODA goals, requirements, and use cases, we have conducted a survey among eight early-adopter sites from the US, Europe, and Japan that operate top-50 high-performance computing systems. We have assessed the technologies leveraged to build their ODA frameworks, identified use cases and other push and pull factors that drive the sites’ ODA activities, and report on their operational lessons.

Publication

EEHPCWG State of Practice Workshop at the 2020 IEEE International Conference on Cluster Computing (CLUSTER)

Operational Data Analytics

High Performance Computing

Energy Efficient HPC Working Group (EEHPCWG)

Summit Supercomputer

Authors

Torsten Wilde

HPC System Software Architect

Torsten Wilde (Ph.D.) is a system software architect at Hewlett Packard Enterprise (HPE). His work spans high-volume, high-frequency data collection and analytics for IT operations and dynamic system power management. He is part of the leadership team of the Energy Efficient HPC Working Group (EE HPC WG).

Stefan Ceballos

Data Engineer

Stefan Ceballos is a data engineer in the HPC Core Operations group at Oak Ridge National Laboratory. His background is in monitoring, and his work focuses on designing, deploying, and maintaining the big data platform that enables new insights for the National Center for Computational Sciences (NCCS), home to the Oak Ridge Leadership Computing Facility.

Melissa Romanus

Data Management Engineer

Melissa Romanus is a data management engineer at the National Energy Research Scientific Computing Center (NERSC) at Lawrence Berkeley National Laboratory, where she is part of the Operations Technology Group. Her work focuses on the ingestion, collection, analysis, and visualization of real-time streaming operational and systems data in HPC data centers. Her research interests span operational data analytics, the architecture of large-scale data lakes, and automating scientific workloads on HPC systems.